Every application running on Vapor is powered by AWS Lambda, which allows us to run code without needing to think about servers. One of the biggest benefits of this type of infrastructure is that it allows our applications to automatically scale to meet demand with little to no capacity planning.

In order to better understand how far an application can scale with Lambda, it’s important to familiarize ourselves with one of its core concepts: concurrency.

According to AWS, concurrency in Lambda is “the number of executions of your function code that are happening at any given time.” So, given that the default limit on a new AWS account is 1,000 concurrent executions, a web application can process up to 1,000 requests simultaneously.

This sounds simple; however, there is more than meets the eye if we keep digging.

Reserved concurrency

The concurrency limit applied by AWS is shared across all of the functions on an individual account. This means that if one function on the account is executing at the concurrency limit, other functions will be prevented from executing at the same time.

In order to mitigate this, each individual function can reserve capacity from the overall account concurrency pool. For example, to ensure a function can always run up to 100 concurrent executions, you would set the value of “Reserved concurrency” to 100. Note that if the concurrency of this function hits the reserved limit, it will not then pull from the unreserved pool. This means 100 also becomes the maximum number of simultaneous invocations for that function.
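To make the "reserved value is also a ceiling" point concrete, here is a minimal sketch in Python. It is only an illustrative model, not AWS or Vapor code, and the function name `admit` is hypothetical.

```python
def admit(requested, reserved):
    """Model a burst of simultaneous invocations against one function.

    `requested` is how many invocations arrive at the same moment and
    `reserved` is the function's reserved concurrency. Returns a tuple of
    (accepted, throttled). Illustrative model only.
    """
    if requested < 0 or reserved < 0:
        raise ValueError("counts must be non-negative")
    # The reserved value is a hard cap: anything above it is throttled,
    # even if the account's unreserved pool still has spare capacity.
    accepted = min(requested, reserved)
    return accepted, requested - accepted


print(admit(80, 100))   # (80, 0)
print(admit(250, 100))  # (100, 150)
```

In the second call, 150 invocations are throttled even though the rest of the account may be idle, which is the trade-off to keep in mind when choosing a reserved value.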

To interact with this setting in your Vapor project, you may update two options in your vapor.yml file. The concurrency option limits the number of HTTP requests that can be handled at any one time, and the queue-concurrency option limits the number of queued jobs that can be processed at any one time.

For more information, you may visit the concurrency documentation for environments and queues.

Unreserved concurrency

As you may have guessed, unreserved concurrency is any remaining concurrency that has not been explicitly reserved. AWS always keeps at least 100 concurrent executions unreserved so that functions without reserved concurrency can still run. This means that, with the default limit of 1,000, it’s only possible to reserve 900 concurrent executions across all functions on an account without asking AWS to increase the limit.
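The arithmetic can be sketched as follows. This is a rough Python model using the figures quoted in this article (a limit of 1,000 and 100 kept unreserved). The function names and the example reservations for "web" and "queue" are hypothetical.

```python
ACCOUNT_LIMIT = 1000   # default account limit cited in this article
MIN_UNRESERVED = 100   # amount that must stay unreserved


def max_reservable(account_limit=ACCOUNT_LIMIT, min_unreserved=MIN_UNRESERVED):
    """Total concurrency that can be reserved across all functions."""
    return account_limit - min_unreserved


def unreserved_pool(reservations, account_limit=ACCOUNT_LIMIT,
                    min_unreserved=MIN_UNRESERVED):
    """Return what is left for functions with no reservation of their own.

    `reservations` maps a (hypothetical) function name to its reserved
    concurrency. Raises ValueError if the total exceeds what can be reserved.
    """
    total = sum(reservations.values())
    if total > max_reservable(account_limit, min_unreserved):
        raise ValueError("reservations would eat into the minimum unreserved pool")
    return account_limit - total


print(max_reservable())                              # 900
print(unreserved_pool({"web": 300, "queue": 200}))   # 500
```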

Provisioned concurrency

When reading about serverless applications, you may have encountered the term “cold start”. A cold start is when a serverless function takes longer than usual to execute. It happens when the function has not been executed recently, so the cloud provider must initialize the environment before the function can run. Once the function has been executed, it will remain warm for a period of time and subsequent executions will be much faster.

Provisioned concurrency can be used to alleviate this issue by initializing a given number of execution environments and keeping them ready to respond immediately to execution requests.

There is a cost associated with utilizing provisioned concurrency. It’s also worth noting that provisioned concurrency counts towards a function’s reserved concurrency. You cannot allocate more provisioned concurrency than you have reserved.
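The relationship between the two settings can be expressed as a small check. The sketch below is a Python model of the rule described above, not an AWS API, and `check_provisioned` is a hypothetical name.

```python
def check_provisioned(provisioned, reserved):
    """Validate a provisioned value against a function's reserved concurrency.

    Returns the part of the reservation that is NOT pre-initialized, i.e. the
    executions that may still experience a cold start. Illustrative only.
    """
    if provisioned < 0 or reserved < 0:
        raise ValueError("values must be non-negative")
    if provisioned > reserved:
        # Provisioned concurrency counts towards the reservation,
        # so it can never be larger than the reservation itself.
        raise ValueError("provisioned concurrency cannot exceed reserved concurrency")
    return reserved - provisioned


print(check_provisioned(20, 100))  # 80
```

With 100 reserved and 20 provisioned, up to 20 simultaneous executions are served by environments that are already initialized, and the remaining 80 are initialized on demand.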

To utilize provisioned concurrency with your Vapor project, you may update the capacity option of your vapor.yml file.

If you would like similar functionality, but without the added cost, you may want to look at prewarming, which can also be configured from your vapor.yml file.

For more information about using this functionality in your Vapor project, you may visit the documentation for provisioned concurrency and prewarming.

Throttling

Throttling occurs when there is not enough concurrency to process the number of required executions. For example, imagine the reserved concurrency on a Lambda function is set to 10 and that Lambda function is triggered when a job is added to an SQS queue. If more than 10 jobs are added to that queue simultaneously, there is not enough concurrency to process them all at once, and the jobs that were not processed will end up back on the queue.
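The queue example can be sketched as a toy simulation in Python. It assumes, purely for illustration, that every job takes exactly one "round" to finish and that throttled jobs simply wait on the queue for the next round. Real SQS and Lambda behavior involves polling, batching, and visibility timeouts that are not modeled here. The function name `drain` is hypothetical.

```python
from collections import deque


def drain(jobs, concurrency):
    """Process `jobs` in rounds of at most `concurrency` jobs each.

    Returns a list of rounds, where each round is the list of jobs that
    ran together. Jobs that do not fit in a round stay on the queue.
    """
    if concurrency < 1:
        raise ValueError("concurrency must be at least 1")
    queue = deque(jobs)
    rounds = []
    while queue:
        # Only `concurrency` jobs can run at once; the rest are throttled
        # and remain queued until capacity frees up.
        batch = [queue.popleft() for _ in range(min(concurrency, len(queue)))]
        rounds.append(batch)
    return rounds


rounds = drain(range(25), concurrency=10)
print([len(batch) for batch in rounds])  # [10, 10, 5]
```

Nothing is lost in this model: the 15 jobs that could not run in the first round are simply delayed, which mirrors how throttled queued jobs return to the queue.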

The impact of throttling depends on how the function is being used. In the case of Vapor, that can be one of three things:

- HTTP Requests - When a request hits API Gateway or an Application Load Balancer, it sends the request to Lambda for processing. If the Lambda invocation is throttled, an error response will be returned from your API.

- Queued Jobs - Vapor configures SQS to trigger a Lambda function when a job is added to the queue. If the Lambda invocation is throttled, the job will be added back to the queue.

- CLI - Commands and scheduled tasks are handled by Lambda. Should the invocation be throttled, the command will fail.

Monitoring

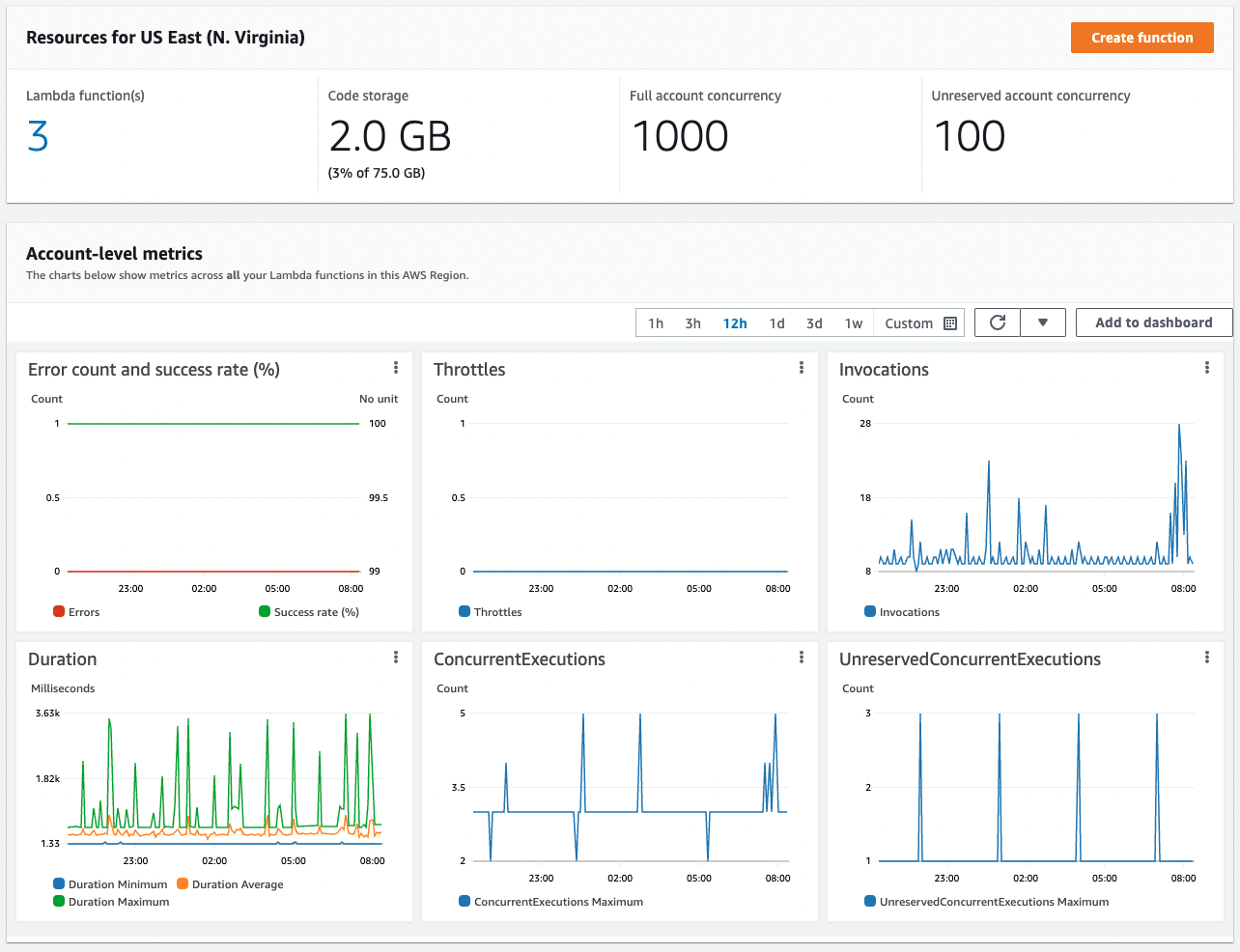

The AWS console has some great monitoring tools that allow you to see how your Vapor application is performing. To dig in, log in to AWS > Navigate to the Lambda service > Click on the relevant function > Click on the Monitor tab.

This dashboard provides a multitude of metrics to help you monitor your environment, such as a log of invocations and charts showing successful invocations, throttled invocations, and invocations that returned an error.

Managing Concurrency

If you notice that your Lambda function is regularly being throttled, it may be time to consider tweaking your configuration. If another function on the account is dominating invocations, it may be possible to limit its concurrency to free up the unreserved pool for the functions being throttled. You could also consider reserving some concurrency for the function being throttled to ensure it always has the capacity it needs to run. Finally, if you have exhausted all options to successfully share the concurrency pool on your account, you may contact AWS and ask for your pool to be extended. The 1,000 concurrent invocation limit is a soft limit which, according to the documentation, can be increased to "tens of thousands".